Article Summary

Every major technology shift forces the design industry to revisit the same fundamental question: what is design? From desktop publishing in the 80s to AI today, the cycle of reckoning has been accelerating, and the answers keep expanding. This article traces that pattern through Frank Chimero's decade of writing, the evolution from Design Thinking to Critical Design to Xenodesign, Bruno Latour's Actor-Network Theory, and Duchamp's readymade, arguing that AI isn't just another tool but an active participant in the creative process that demands an entirely new framing. The artifact was always a byproduct of the thinking, never the thinking itself, and the cycle has finally gotten tight enough to make that impossible to ignore.

Key Takeaways

- The question "what is design?" resurfaces every time a new technology commoditizes a layer of production. The cycle used to reset every decade. Now it resets every six months.

- Frank Chimero has been documenting this cycle since 2013 across four major pieces, each responding to a different technology but asking the same underlying question.

- Design is amorphous. It absorbs whatever gets close to it: strategy, code, content, research, operations. That's why the definition never stays settled.

- Bruno Latour's Actor-Network Theory, which treats human and non-human actors as equal participants in a network, anticipated the exact dynamic we're now navigating with AI.

- Previous tools were deterministic. AI is not. It suggests directions before intent is fully formed, nudges taste, and makes certain outcomes feel inevitable because they're easy to arrive at. We're using an old lens for a fundamentally new kind of tool.

- AI isn't replacing practitioners. It's replacing the parts of the role that practitioners absorbed along the way: production, QA, asset management, and documentation.

- If design was only ever the artifact, then AI is a threat. If it was always the judgment, the framing, and the intent behind the decisions, then this moment is a needed correction.

Full Article

We've been asking "what is design?" for longer than most people in this industry have been alive.

Desktop publishing asked it in the 80s when anyone with a Mac and PageMaker could suddenly set type. The internet asked it again in the mid-90s when the medium went from paper to screen, and nobody knew the rules. Flash asked it. Mobile asked it. Design systems asked it. And now AI is asking it, louder and faster than anything before.

This isn't a new crisis. It's an old question on a tighter loop.

The Cycle

The pattern is economic. Every time a new technology commoditizes a layer of production, the people working in that layer have to reckon with what they actually do. Typographers had to reckon with it when anyone could set type on a Mac. Web designers had to reckon with it when Squarespace shipped. And now interface designers are reckoning with it because AI can generate screens.

Each time, the reckoning follows the same arc: the new tool arrives, the existing practitioners panic, the definition of the discipline expands to accommodate what the tool can't do, and eventually everyone settles into a new equilibrium. Until the next tool shows up.

Frank Chimero has been documenting this in real time for over a decade. In "What Screens Want" (2013), the industry was still dragging physical metaphors into digital interfaces, leather textures and drop shadows, as if the screen needed to pretend it was something else. By "The Web's Grain" (2015), the argument had sharpened: every material has affordances, and fighting the natural character of the web only produces what he called "bicycle bear websites." Impressive that you can teach a bear to ride a bicycle. But that's not what bears are supposed to do.

Then came "Everything Easy Is Hard Again" (2018), where the question shifted from medium to workflow. After three years away from client work, Chimero returned to a landscape of new build tools, new frameworks, and new abstractions that made him ask whether 20 years of web experience is really 20 years, or five years repeated four times.

That observation hits harder when you notice the repeats are getting faster.

His most recent piece, "Beyond the Machine" (2025), reframes AI not as a tool or a threat but as an instrument. You use a tool. You play an instrument. The distinction matters: instruments require a performance, a touch, instincts that can't be automated. Four different technologies. The same question underneath all of them: what part of design survives when the tools change?

The Expanding Definition

Part of what makes the question so hard to answer is that design keeps absorbing new territory. It's amorphous. As the world changes, design pulls in whatever is adjacent and makes it part of the discipline. Strategy gets close, design absorbs it. Code gets close, design absorbs it. Content, accessibility, operations, facilitation—all consumed.

The industry has tried to name this expansion as it happens. In the 2000s and 2010s, we had "Design Thinking," the era when business tried to learn from design's methods and design tried to learn from business. IDEO and the Stanford d.school built frameworks for empathy, ideation, and prototyping that could be taught to anyone. It was design's vocabulary crossing over into boardrooms and MBA programs. For a while, it looked like the answer to "what is design?" was "a process anyone can follow."

Then came Critical Design, pioneered by Anthony Dunne and Fiona Raby at the Royal College of Art. Their argument was that design didn't have to solve problems at all. It could ask questions instead. It could use speculative proposals to challenge assumptions about technology, consumption, and culture. Critical Design reframed the discipline not as a service function but as a form of inquiry. Design as critique, not design as solution.

And now there's Xenodesign, a term coined by Johanna Schmeer and published through MIT. Xenodesign pushes even further. It argues that human-centered design, for all its merits, has partly contributed to the environmental and social problems it was supposed to help solve by centering everything on human needs and desires. Xenodesign proposes designing with and for non-human entities: ecologies, bacteria, soil, and artificial intelligences. All are treated as actors with agency. Not as tools to be used, but as participants in the system.

Which brings us to Bruno Latour.

The Network Was Always There

Latour's Actor-Network Theory (ANT), developed in the 1980s, proposed something that sounded abstract at the time but feels obvious now: the world is not made up of humans acting upon inert objects. It's a network of human and non-human actors, all participating, all shaping outcomes. A laboratory isn't just scientists doing experiments. It's scientists, equipment, funding structures, institutional politics, and published papers, all acting on each other. None of them are passive—none of them are neutral.

From the ANT viewpoint, objects are designed to shape human action and influence decisions. The design of a thing mediates human relationships and can impact morality, ethics, and politics.

Read that again in the context of AI.

We are now designing alongside non-human actors that generate artifacts, write code, produce layouts, and make suggestions. The network of actors in any design process has expanded, and the human designer's role within it is being renegotiated in real time. Latour's framework, written decades before generative AI existed, describes exactly this dynamic. The question was never "human or machine." It was always "what role does each actor play in the network, and who decides?"

This is where Xenodesign, Critical Design, and the "what is design?" question all converge. The discipline has spent decades expanding its boundaries outward. It absorbed business strategy through Design Thinking. Cultural critique through Critical Design. Non-human agency through Xenodesign. And now, with AI, the non-human actor isn't theoretical. It's sitting in the workflow, generating outputs, and waiting for someone with judgment to decide what's good.

Not a Hammer

Here's where it gets interesting, and where I think most of the current debate goes wrong.

Tools aren't neutral. They encode priorities. What's easy to build gets built more often, whether it matters or not. Over time, what we make starts to reflect the limits and assumptions of the tools themselves. Chimero's "grain" argument applies here too: every medium pushes you toward certain outcomes unless you actively resist.

But the tools of the past were largely deterministic. The machine behaved in predictable ways. You learned the tool, you mastered the tool, and the tool did what you told it. Generative AI doesn't work like that. It's non-deterministic. It suggests directions before your intent is fully formed. It nudges taste. It makes certain outcomes feel inevitable simply because they're easy to arrive at.

Marcel Duchamp figured out something adjacent to this a century ago. The "readymade" wasn't about laziness. It was about context, selection, and declaring meaning. Take something that already exists, place it somewhere new, and force a different reading. In that sense, we've been living in an era of conceptual readymades for a long time. We remix. We reinterpret. We let ideas simmer and collide. That part isn't new.

What's new is that the tool is doing the remixing with us. Not as a passive instrument, but as an active participant. And we keep arguing about it as if it's a new type of hammer.

That's the blind spot. We're using an old lens, one built for predictable, obedient tools, to frame something that behaves very differently. Until we update that framing, we'll keep debating the wrong problems and missing where the real work has shifted.

The Tightening Loop

What's different about this moment isn't the question. It's the cycle speed.

Desktop publishing gave designers a decade to adjust. The web gave them maybe five years before the next disruption. Mobile compressed it further. Design systems further still. And now AI is forcing a reckoning every six months. The cycle is getting so tight that the profession can't settle into a new equilibrium before the next wave hits. There's no time to get comfortable before the ground shifts again.

I had a conversation recently with an engineer who told me that six months ago, Claude wasn't good enough. Then overnight, it got good. Now it writes 90% of his code.

But here's the part people skip: this is a senior engineer. Someone with years of judgment about architecture, tradeoffs, and what "good" means in context. The tool didn't replace the practitioner. It replaced the parts of the role that the practitioner had absorbed along the way. The boilerplate. The scaffolding. The mechanical work that accumulated around the actual job over years of being the person closest to the keyboard.

Design roles have the same bloat. You start in production: layouts, assets, redlines, QA, and documentation. Over time, you grow into more abstract work. Problem framing. Stakeholder alignment. Strategy. But the production never falls off. You just keep stacking. The strategic thinking gets added on top of the pixel-pushing, and nobody takes the pixel-pushing away. AI is clawing back some of that production layer. And what's left looks a lot more like the job that some designers have been trying to grow into for their entire careers.

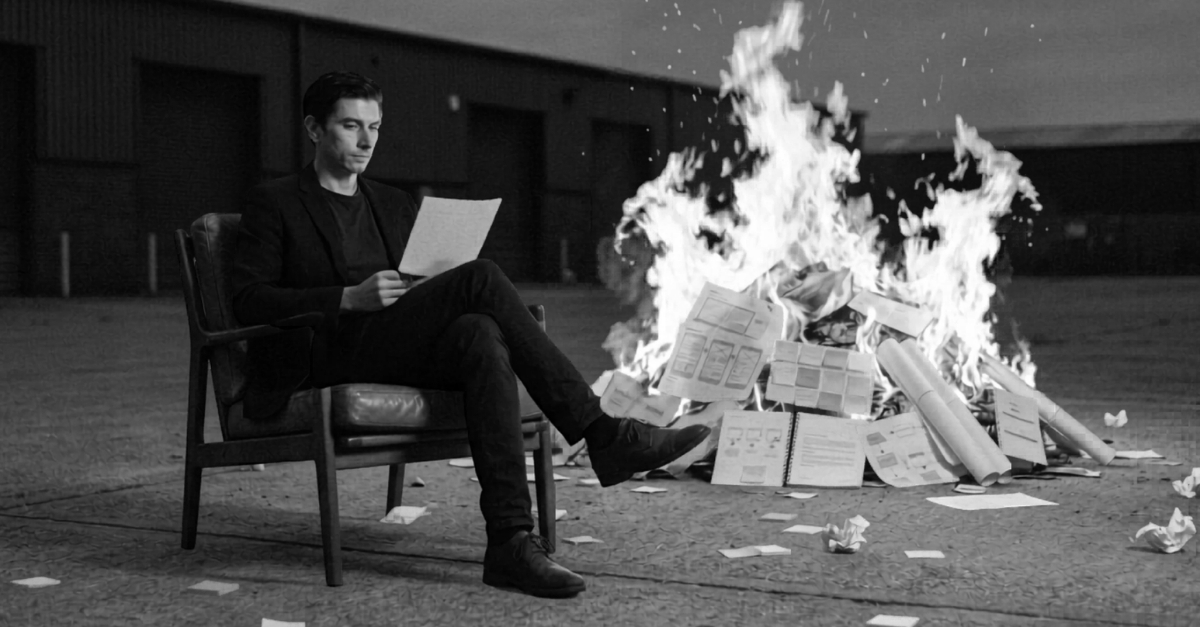

The Death Grip and the Correction

Some people find that exciting. Some people are holding a death grip on the idea that pushing pixels is what makes them a designer. I understand why. The artifact is tangible. You can point to it. It filled your portfolio and got you the job. When someone tells you the thing you've been optimizing for your entire career might not be the thing that matters most, that lands hard.

But the artifact was always a byproduct of the thinking. The screen was proof that you did the work. It was never the work itself.

Some designers are tired of the hype. Tired of being told every six months that their profession is dead, then alive, then dead again. Some have landed on "generative AI actually sucks" as a resting position. I understand the impulse. But I think that's a reaction to the volume, not the technology. And in most cases, the people saying it doesn't work haven't invested the time to learn how to use it well. The engineer I spoke with didn't wake up one morning and suddenly know how to use AI to write 90% of his code. He brought decades of skill to the prompt. The tool amplified his judgment. Without the judgment, it would have amplified nothing.

Opting out of the conversation doesn't slow the cycle. And choosing a side, AI evangelist or AI skeptic, misses the point. The technology isn't the story. The question it's forcing is.

If design was only ever the artifact, the Figma file, the screen, the deliverable, then yes, this moment looks like a threat. But if design is something deeper: judgment, framing, understanding human tension, shaping meaning, making choices with intent, then this isn't the death of design.

It's a needed correction. One that's been building for 40 years. What makes this moment different is that it's not just design questioning its identity. It's a growing list of roles and industries, all at the same time.

Design is dead. Long live design.

Frequently Asked Questions (FAQ)

Is AI going to replace designers?

AI is replacing parts of the design role that were never really design to begin with: production tasks, asset management, documentation, and mechanical execution. The core of the discipline, problem framing, judgment, and intent, still requires human practitioners.

What is the "what is design?" cycle?

Every time a major technology commoditizes a layer of production, the design profession is forced to re-examine what it actually does. This has happened with desktop publishing, the internet, mobile, design systems, and now AI. The cycle is accelerating.

How is AI different from previous design tools?

Previous tools were deterministic: the machine did what you told it. AI is non-deterministic. It suggests directions before intent is fully formed, nudges taste, and makes certain outcomes feel inevitable because they're easy to arrive at. It's an active participant in the creative process, not a passive instrument. We need a new framing to understand it.

How does Bruno Latour's Actor-Network Theory relate to design and AI?

Latour proposed that the world is a network of human and non-human actors, all shaping outcomes together. With AI now generating artifacts and influencing decisions alongside human designers, his framework describes exactly the dynamic the profession is navigating today.

What is Xenodesign?

Coined by Johanna Schmeer and published through MIT, Xenodesign challenges human-centered design by proposing that non-human entities (ecologies, AI, biological systems) should be treated as actors with agency in the design process. It draws from speculative design and speculative realism.

What is Critical Design?

Pioneered by Anthony Dunne and Fiona Raby at the Royal College of Art, Critical Design uses speculative proposals to challenge assumptions about technology and culture. Rather than solving problems, it reframes design as a form of inquiry and critique.

Why are some designers resistant to AI?

Many designers have built their identity and career around the artifact: the Figma file, the polished screen, the deliverable. When AI threatens that specific layer of production, it can feel like an existential threat. The resistance is often a reaction to hype-cycle fatigue rather than to the technology itself.